How to Choose the Right AI Automation Partner (Without Costly Mistakes)

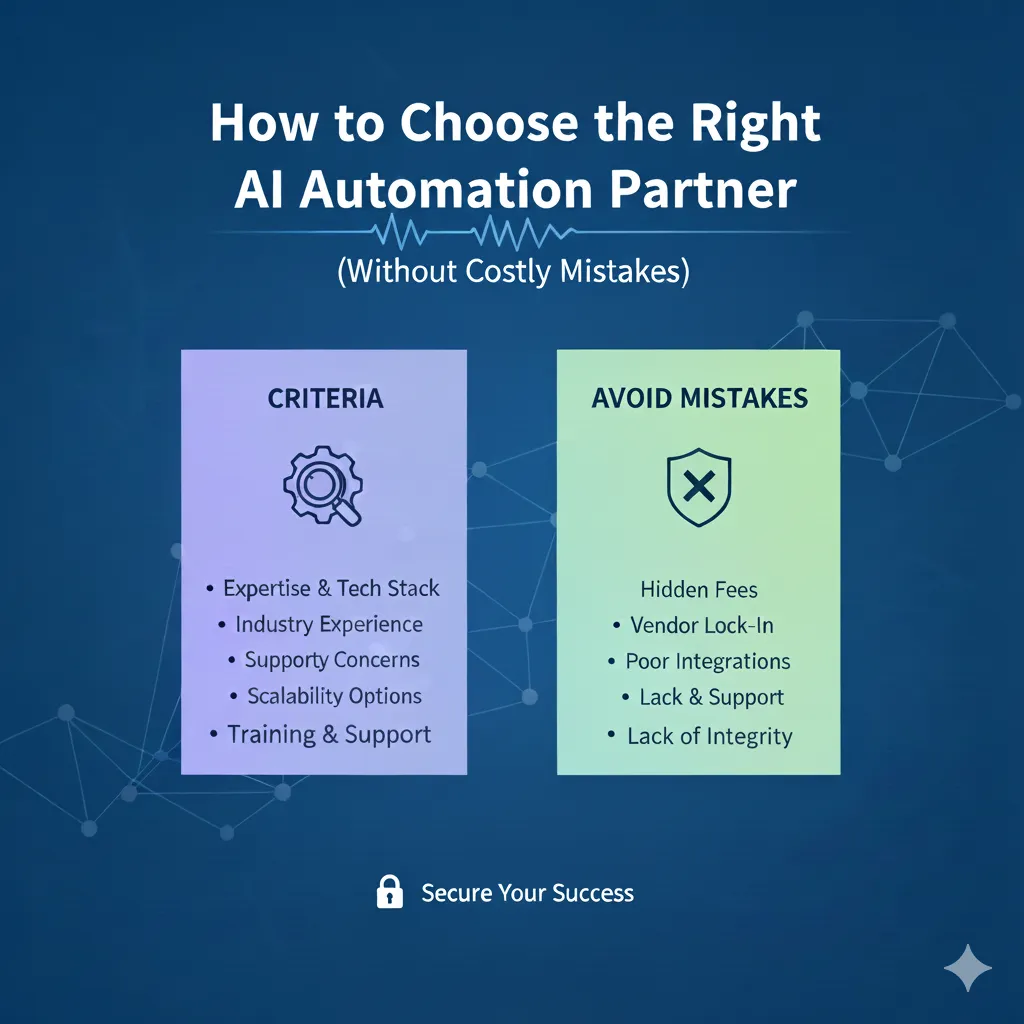

Picking an AI automation partner is a strategic decision that shapes how your organization captures value from AI. This guide cuts through the noise: it identifies the selection pitfalls, shows how to evaluate vendor capability, and highlights the must-have checks (data security, integration, cost clarity, and more) so you can choose a partner who delivers measurable results.

Realizing AI’s promise depends on recognizing and managing the key factors that make implementations succeed.

Critical Success Factors for AI Implementation

AI brings big upside, but that upside only materializes with careful implementation. Knowing which factors drive success helps organizations protect value and avoid costly missteps during deployment.

An evaluation of the critical success factors impacting artificial intelligence implementation, MI Merhi, 2023

What Are the Top Challenges in Selecting an AI Automation Partner?

Finding the right partner requires navigating several common but consequential challenges. Being deliberate about these issues will help you match a vendor’s capabilities to your business priorities. The key challenges to watch for are:

Vague Objectives : Without clear, measurable goals it’s easy to pick a solution that doesn’t move the needle.

Technical Expertise and Industry Experience : You need partners who can show they’ve solved problems like yours, both technically and within your sector.

Data Security and Governance : Protecting sensitive data and meeting compliance requirements are non‑negotiable for production AI.

Identifying and addressing these items early makes selection faster and reduces the risk of project failures later on.

Beyond these basics, AI partnerships raise deeper considerations around scale, interpretability, and human–machine collaboration that also deserve attention.

Key Challenges in AI Automation Partner Selection

Even as capabilities improve, persistent challenges remain: scaling solutions, integrating human oversight, protecting privacy, explaining model behavior, and adapting to shifting data patterns.

Leveraging AI for Better Data Quality and Insights, 2025

How Do Vague Objectives Impact AI Partner Selection?

Vague objectives make it hard to compare vendors or measure success. If you haven’t defined the outcome you want, whether it’s cycle time reduction, higher accuracy, or cost savings, vendors will present generic solutions and estimates. Start with specific, measurable goals and use them to frame your vendor evaluation and contracts.
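One way to make a goal "specific and measurable" is to write it down as a baseline, a target, and a unit, then report progress as the share of that gap closed. The sketch below is only an illustration: the `Objective` class, its field names, and the invoice example figures are hypothetical and not taken from any particular vendor or framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Objective:
    """A measurable goal: where you are now, where you want to be."""
    name: str
    baseline: float  # current measured performance
    target: float    # agreed goal for the project
    unit: str

    def progress(self, observed: float) -> float:
        """Fraction of the baseline-to-target gap closed so far.

        Works whether the metric should go up (accuracy) or down (cycle time).
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (observed - self.baseline) / gap


# Hypothetical example: cut invoice cycle time from 10 days to 6 days.
cycle_time = Objective("invoice cycle time", baseline=10.0, target=6.0, unit="days")
halfway = cycle_time.progress(8.0)  # 8 days observed after the pilot
```

Writing objectives this way gives every vendor the same numbers to estimate against, and the same definition can later be reused in contract milestones.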

Why Is Assessing Technical Expertise and Industry Experience Crucial?

Technical skill alone isn’t enough. The best partners combine technical depth with domain experience so they can translate your business challenges into reliable, production‑grade systems. Look for concrete examples: architecture choices, data pipelines, performance metrics, and case studies from similar industries.

Prioritize vendors who can demonstrate both the technical stack you need and practical lessons learned from comparable projects.

How to Evaluate AI Automation Vendor Expertise and Industry Experience?

Use a structured checklist when vetting vendors. Below are practical steps that reveal capability and fit:

Review Case Studies : Examine end‑to‑end outcomes, not just marketing claims; look for quantified impact and technical detail.

Evaluate Technical Skills : Match the vendor’s engineering stack and modelling approach to your requirements; ask for code samples, architecture diagrams, and references.

These checks help you move from promises to proof and reduce execution risk once a project starts.
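A structured checklist is easier to compare across vendors when each item is rated and weighted. The following is a minimal sketch of a weighted scorecard; the criteria names, the weights, the 1 to 5 rating scale, and the two example vendors are all assumptions chosen for illustration, so substitute your own.

```python
# Hypothetical criteria and weights; adjust them to your own priorities.
WEIGHTS = {
    "case_studies": 0.4,
    "technical_skills": 0.4,
    "references": 0.2,
}


def weighted_score(ratings: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion ratings (assumed 1-5) into one weighted score."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[name] * w for name, w in weights.items()) / total_weight


# Illustrative ratings produced by your evaluation team.
vendors = {
    "Vendor A": {"case_studies": 4, "technical_skills": 3, "references": 5},
    "Vendor B": {"case_studies": 3, "technical_skills": 5, "references": 4},
}

# Highest score first.
ranked = sorted(vendors, key=lambda v: weighted_score(vendors[v]), reverse=True)
```

A single score should not replace judgment, but agreeing on weights before the demos start makes it harder for a polished presentation to outweigh weak evidence.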

What Technical Skills Should You Look for in an AI Partner?

At minimum, a capable AI partner should demonstrate strength in:

Machine Learning : Practical experience with relevant algorithms, model validation, and production deployment.

Data Analysis : Ability to profile, clean, and transform messy enterprise data into reliable inputs for models.

Software Development : Production engineering skills for APIs, monitoring, testing, and maintainable code.

Together, these competencies ensure solutions are accurate, maintainable, and operational at scale.

How Do Industry-Specific Case Studies Inform Your Decision?

Case studies show how a vendor handled constraints you’ll likely face: regulatory boundaries, legacy systems, or domain‑specific edge cases. Look for narratives that include objectives, approach, obstacles, metrics, and what changed after deployment. Those details reveal whether the vendor truly understands your environment.

What Are the Key Data Security, Quality, and Governance Considerations?

Data is the backbone of AI. Rigor around security, quality, and governance determines whether your models are reliable and compliant in production.

These are the core areas to verify:

Data Quality : Ensure processes exist for cleansing, labeling, versioning, and monitoring data used in training and inference.

Governance Practices : Confirm policies for access control, lineage, retention, and regulatory compliance.

Prioritizing these controls reduces model drift, protects privacy, and supports auditability.

How Does Data Quality Affect AI Implementation Success?

Poor data produces poor models. High‑quality, representative data improves accuracy and reduces bias. Build repeatable pipelines for validation and cleansing, and include ongoing monitoring so you detect data shifts before they impact decisions.

Investing in data hygiene upfront saves time and preserves model value over the long term.
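Ongoing monitoring for data shifts can start with a simple statistic. The sketch below computes the Population Stability Index (PSI), one common way to compare the distribution of a feature at training time with what the model sees later. It is an illustration rather than a prescribed method: the function name, the equal-width binning, and the sample data are assumptions, and the often-quoted reading that a PSI above roughly 0.25 signals a significant shift is a rule of thumb, not a standard.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between two numeric samples.

    Bins are equal-width and derived from the baseline sample only.
    """
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread to bin")
    width = (hi - lo) / bins

    def fractions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp outliers into the edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = fractions(baseline), fractions(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


# Illustrative data: the "current" sample has drifted upward by 0.5.
training_sample = [i / 100 for i in range(100)]
production_sample = [v + 0.5 for v in training_sample]

no_drift = psi(training_sample, training_sample)
drift = psi(training_sample, production_sample)
```

Asking a vendor how they would run a check like this on a schedule, and who gets alerted when it trips, is a quick test of whether monitoring is part of their delivery or an afterthought.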

What Data Security Measures Ensure Responsible AI Practices?

Responsible AI requires active security controls. Key measures to confirm include:

Encryption : Encrypt data at rest and in transit to limit exposure if systems are compromised.

Access Controls : Enforce least‑privilege access and track who can view or change data and models.

Regular Audits : Periodic security and compliance audits catch gaps and demonstrate accountability.

These practices reduce risk and make it easier to comply with internal policies and external regulations.

How to Assess Scalability and Integration Capabilities of AI Solutions?

You want AI that grows with you and plugs into existing systems without creating brittle integrations. Focus on architecture and interoperability when evaluating vendors.

Open APIs : Prefer solutions with well‑documented APIs that integrate with your stack.

Flexible Architectures : Look for modular designs that support incremental rollout and future enhancements.

These traits make deployments predictable and reduce rework as needs change.

Why Are Open APIs and Flexible Architectures Important?

Open APIs let you reuse existing systems and data while avoiding vendor lock‑in. Flexible architectures let you update components independently, add capabilities, and scale capacity as demand grows. Together they protect your investment and simplify operations.

How to Ensure Seamless Integration with Existing Systems?

To integrate smoothly, use pragmatic steps:

Assess Compatibility : Map the vendor’s interfaces to your systems and identify gaps early.

Plan for Integration : Define clear milestones, test environments, rollback plans, and responsibilities before you start.

A staged integration plan reduces downtime and helps teams adopt the new capability faster.

What Are the True Costs, ROI, and Pricing Models of AI Automation Partners?

Pricing and ROI are often more complex than the sticker price. To make sound financial decisions, insist on transparency and measurable outcomes.

Transparent Pricing Models : Look for clear, itemized pricing that separates one‑time work from ongoing costs.

ROI Measurement : Define the metrics you’ll use to track value (revenue impact, efficiency gains, error reduction) and baseline current performance before the project starts.

This approach helps you compare vendors on apples‑to‑apples terms and assess whether an investment pays off.

A robust evaluation of AI investments requires a framework that captures both direct and indirect returns.

Evaluating AI Effectiveness and ROI for Automation

A practical ROI framework weighs tangible savings and productivity gains alongside harder‑to‑measure benefits. That full view clarifies which projects justify investment and where automation delivers strategic advantage.

ROI of AI: Effectiveness and measurement, 2021

How to Identify Hidden Costs and Transparent Pricing Models?

Hidden costs can erode projected returns. Common items to surface during negotiations include:

Implementation Fees : One‑time integration or customization charges that may not be in the initial quote.

Maintenance Costs : Ongoing platform, support, and update expenses.

Training Expenses : Fees and time required to train staff and embed new workflows.

Make these costs explicit up front so you can model total cost of ownership and compare vendors fairly.
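Modeling total cost of ownership can be as simple as separating one-time charges from recurring ones and projecting over the contract term. This is a minimal sketch under that assumption; the function name, the cost categories, and every figure are hypothetical, and a real model would likely add discounting, usage-based fees, and internal staff time.

```python
def total_cost_of_ownership(one_time: dict[str, float],
                            annual: dict[str, float],
                            years: int) -> float:
    """One-time costs plus recurring annual costs over the contract term."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return sum(one_time.values()) + years * sum(annual.values())


# Hypothetical quote, with the hidden items made explicit.
one_time_costs = {"implementation": 40_000, "staff_training": 15_000}
annual_costs = {"license": 100_000, "maintenance": 20_000}

tco_3yr = total_cost_of_ownership(one_time_costs, annual_costs, years=3)
```

Running the same calculation for each shortlisted vendor, with the same term and the same cost categories, is what makes the comparison fair.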

What Frameworks Help Measure AI Automation ROI Effectively?

Use established frameworks to quantify impact and track progress. Two practical options are:

Cost-Benefit Analysis : Compare implementation and operating costs against projected savings and revenue gains.

Balanced Scorecard : Track financial, customer, process, and learning metrics to get a broader picture of value.

These frameworks give you repeatable ways to evaluate projects and make investment decisions more objective.
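For the cost-benefit side, two numbers usually anchor the discussion: return on investment and the payback period. The sketch below shows the standard arithmetic for both. The function names and the example figures are illustrative assumptions, and the calculation ignores discounting and benefits that ramp up over time.

```python
import math
from typing import Optional


def roi(total_benefit: float, total_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(upfront_cost: float,
                   monthly_net_benefit: float) -> Optional[int]:
    """Whole months until cumulative net benefit covers the upfront cost.

    Returns None when the project never pays back.
    """
    if monthly_net_benefit <= 0:
        return None
    return math.ceil(upfront_cost / monthly_net_benefit)


# Hypothetical project: 200k total cost, 260k projected benefit.
project_roi = roi(total_benefit=260_000, total_cost=200_000)
# Hypothetical: 50k upfront, 8k net benefit per month after running costs.
months = payback_months(upfront_cost=50_000, monthly_net_benefit=8_000)
```

Because the projected benefit is the least certain input, it helps to rerun these figures with a pessimistic estimate before committing.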

Why Are Ethical AI, Transparency, and Post-Implementation Support Vital?

Responsible practices and reliable support are essential for long‑term adoption. Choose partners who commit to ethical standards and stand by outcomes with training and sustained support.

Responsible AI Principles : Vendors should demonstrate transparency, bias mitigation, and accountability in their solutions.

Post-Implementation Support : Ongoing training, monitoring, and maintenance are what turn pilots into lasting capability.

These commitments protect users, customers, and the business as models move into production.

What Responsible AI Principles Should Your Partner Follow?

Look for partners that operationalize responsible AI through concrete practices:

Transparency : Clear explanations of model behavior, data sources, and decision logic.

Fairness : Active steps to identify and reduce bias in models and datasets.

Human Oversight : Defined processes for human review and escalation when models affect decisions.

These elements help you maintain trust and meet ethical and regulatory expectations.

How Does Ongoing Training and Support Influence AI Adoption?

Training and post‑launch support determine whether teams use AI effectively. Continuous education, documentation, and responsive vendor support reduce friction, build confidence, and ensure the system delivers sustained value.

Frequently Asked Questions

How to choose a consulting partner for building AI-powered business workflows?

Choosing the right AI consulting firm is crucial if you want workflows that improve efficiency and increase revenue. The ideal partner combines industry expertise, technical proficiency, a proven track record, and a commitment to ethical, customized solutions.

What are the common pitfalls to avoid when selecting an AI automation partner?

Common mistakes include proceeding without clear objectives, skipping technical due diligence, and underestimating data governance needs. Cultural fit and communication style also matter; ensure the partner’s ways of working complement your team. Thorough references, case study review, and pilot projects help avoid these traps.

How can businesses ensure that their AI partner aligns with their long-term goals?

Make alignment explicit: share your strategy, define success metrics, and include flexibility in the contract for evolving priorities. Regular performance reviews and joint roadmaps keep the partnership focused on long‑term outcomes rather than short‑term features.

What role does user feedback play in the selection of an AI partner?

User feedback reveals how solutions perform in daily use and how well vendors support adoption. Ask for customer references and probe for post‑implementation support experiences, as this is often where real value or problems surface.

How can organizations assess the ethical standards of potential AI partners?

Request documented policies, evidence of bias testing, and examples of how the vendor explains model decisions. Certifications, audit reports, and clear escalation paths for ethical concerns are strong signals of maturity.

What are the implications of poor data governance in AI partnerships?

Weak governance can cause compliance failures, unreliable models, and reputational harm. It increases the chance of biased outputs and legal exposure. Strong governance frameworks protect data integrity, ensure traceability, and limit business risk.

How can businesses measure the success of their AI automation initiatives?

Define KPIs tied to business outcomes (cost reduction, error rates, throughput, customer satisfaction) and baseline current performance before launch. Combine quantitative tracking with qualitative user feedback and regular reviews to iterate on the solution.

Conclusion

Selecting the right AI automation partner takes clarity, technical scrutiny, and a focus on security, scalability, and ethics. Use the frameworks and checks above to compare vendors objectively, reduce risk, and ensure your investments translate into real business outcomes. When you’re ready, lean on experienced advisors and pilot projects to validate assumptions before scaling. Reach out to ThePowerLabs to start a discussion today!